DSO: Direct Sparse Odometry

Contact: Jakob Engel, Prof. Vladlen Koltun, Prof. Daniel Cremers

Abstract

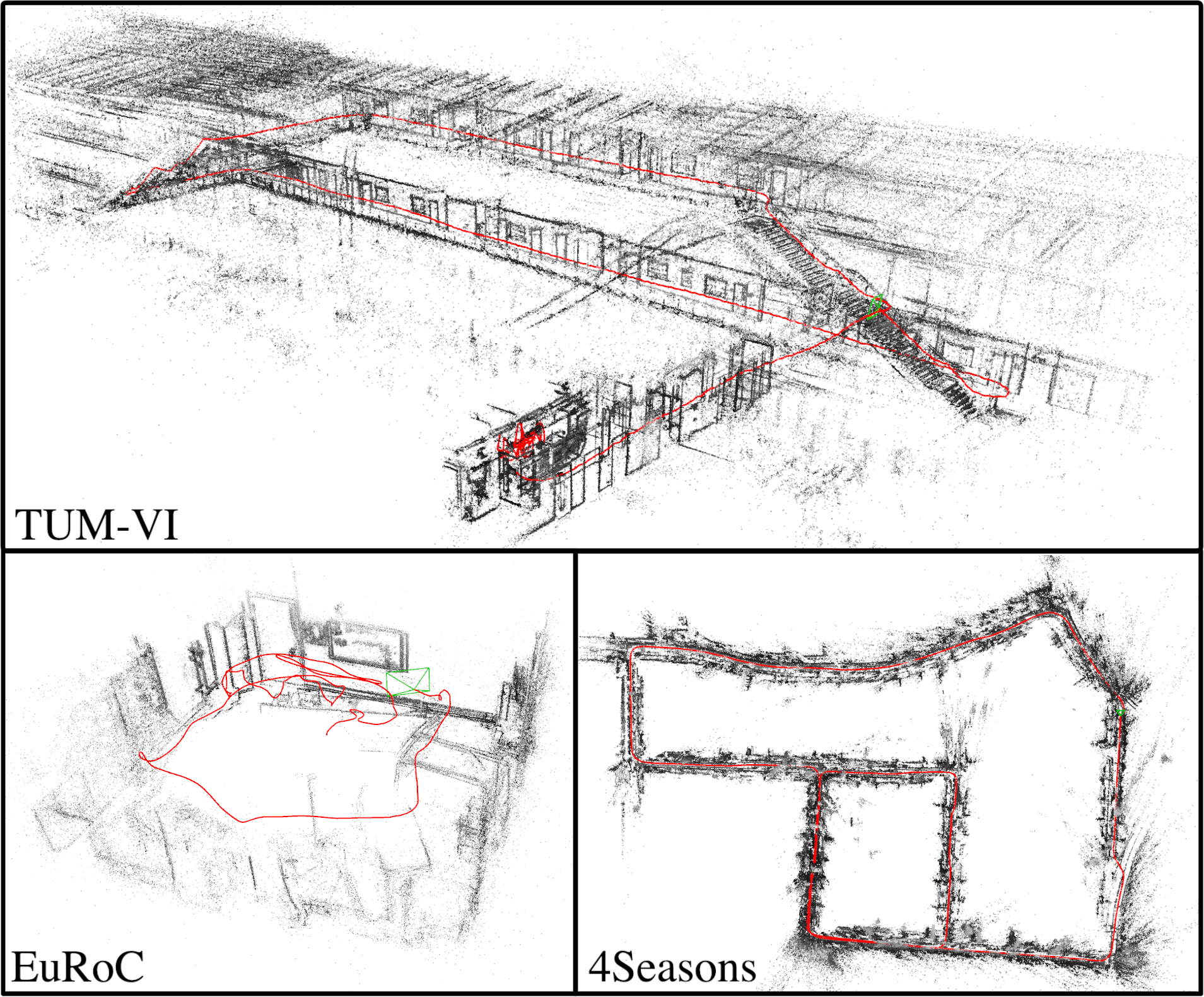

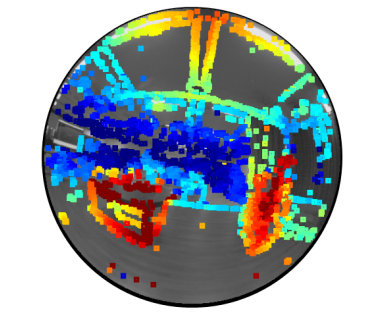

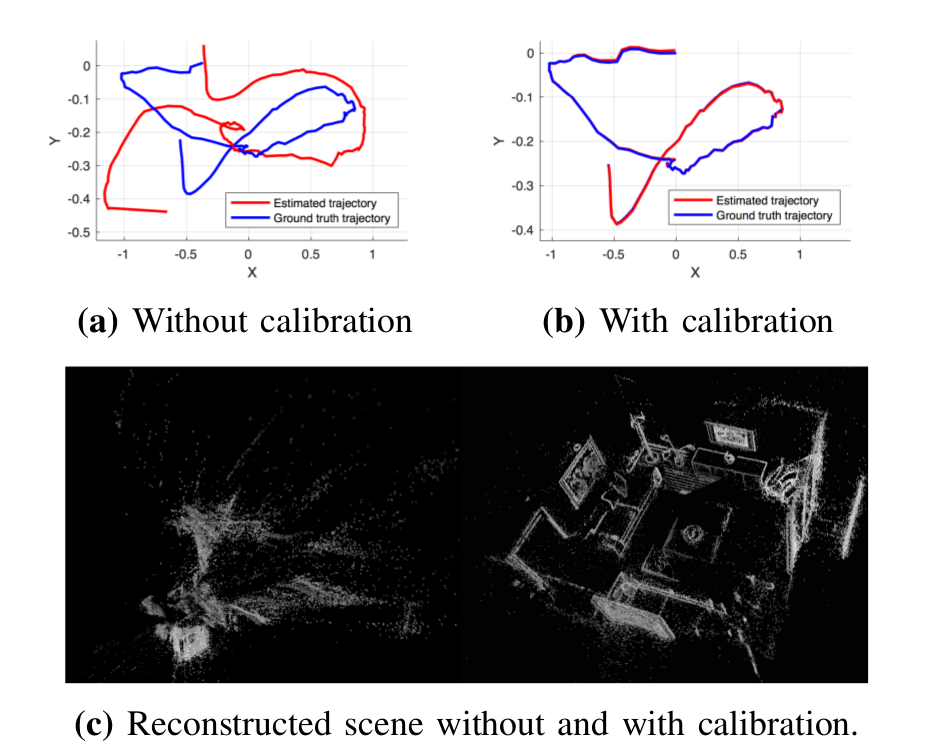

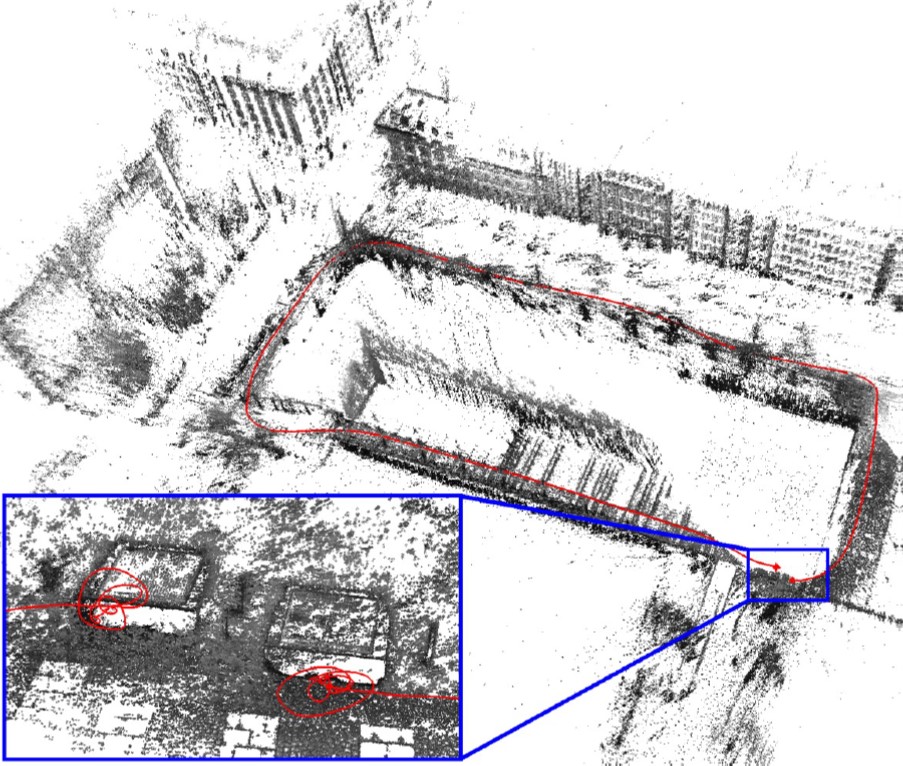

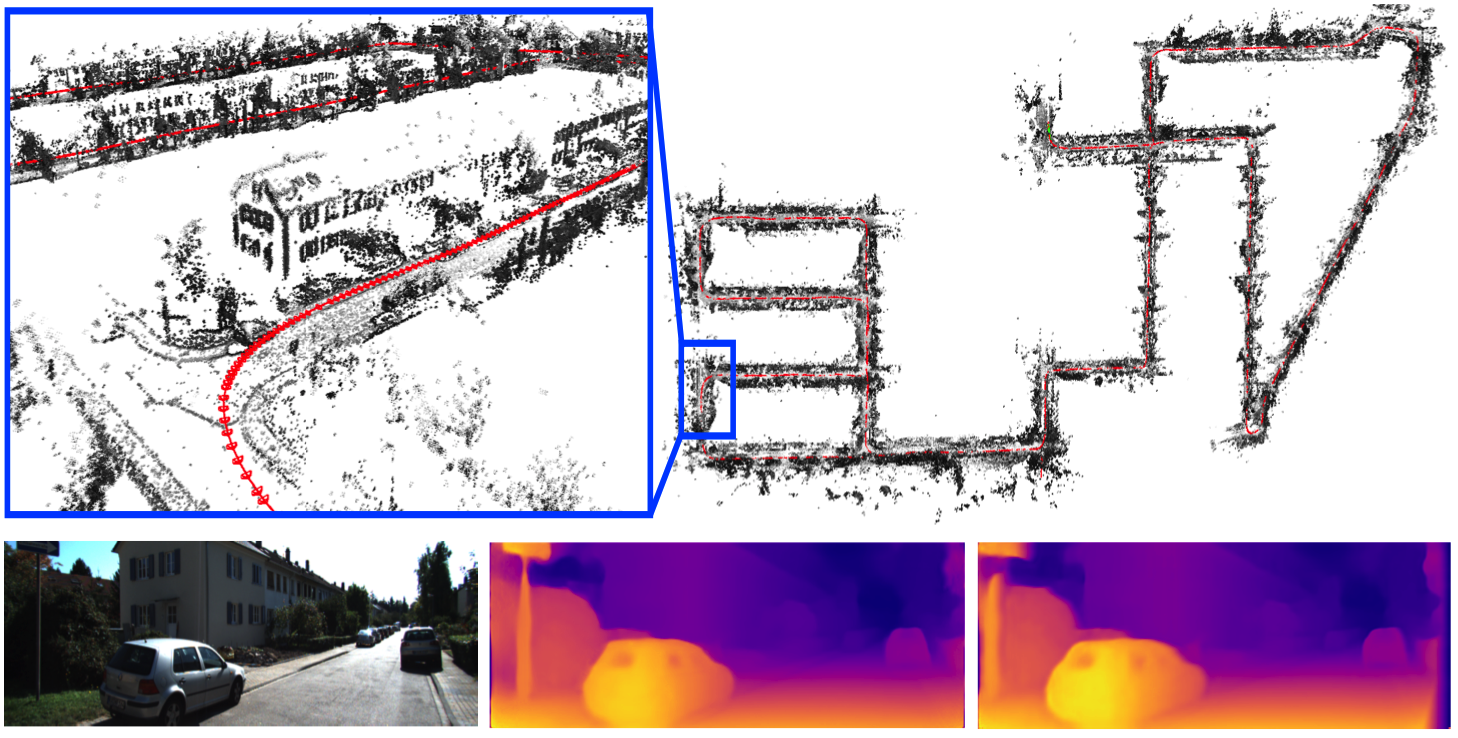

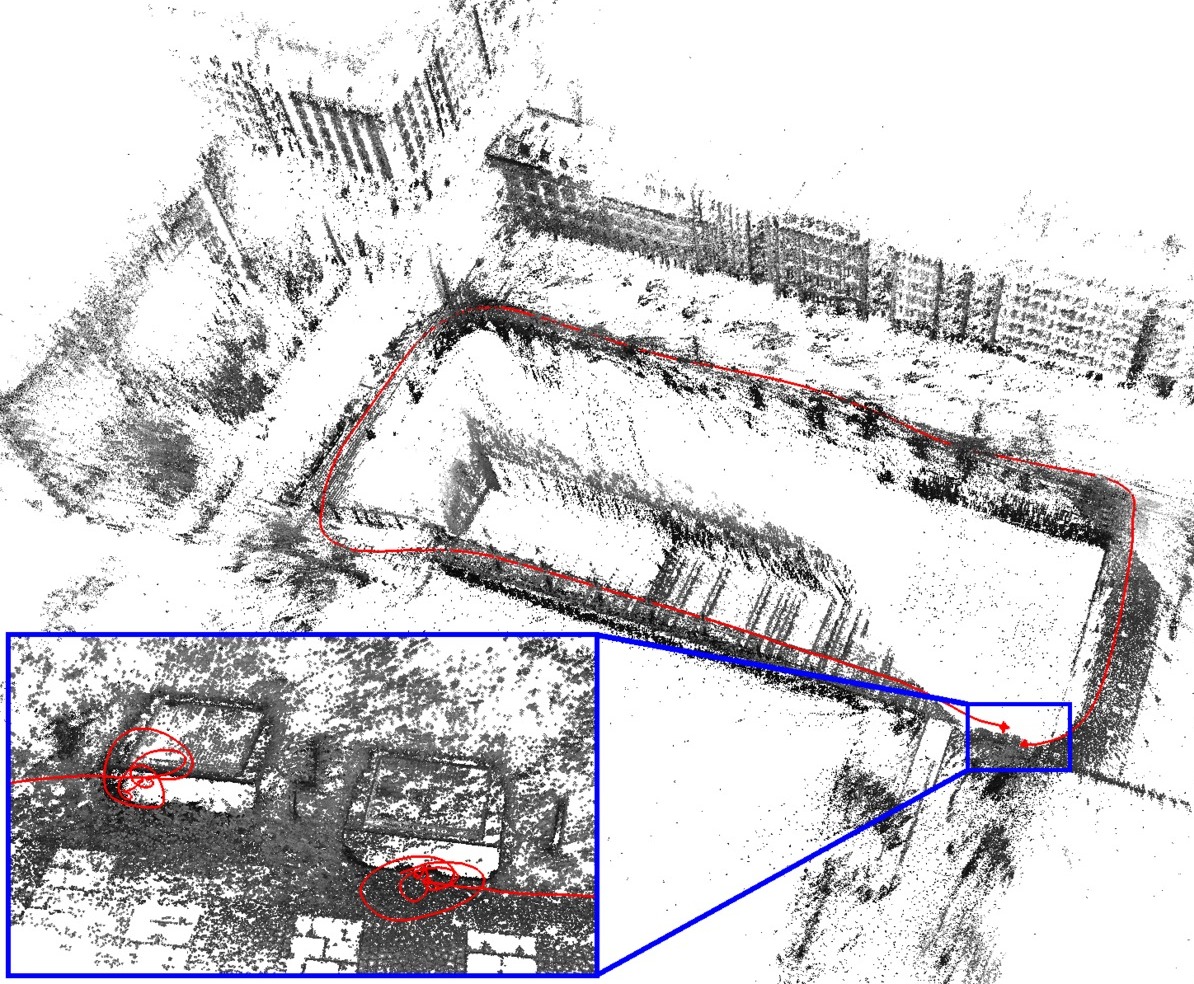

DSO is a novel direct and sparse formulation for Visual Odometry. It combines a fully direct probabilistic model (minimizing a photometric error) with consistent, joint optimization of all model parameters, including geometry - represented as inverse depth in a reference frame - and camera motion. This is achieved in real time by omitting the smoothness prior used in other direct methods and instead sampling pixels evenly throughout the images. DSO does not depend on keypoint detectors or descriptors, thus it can naturally sample pixels from across all image regions that have intensity gradient, including edges or smooth intensity variations on mostly white walls. The proposed model integrates a full photometric calibration, accounting for exposure time, lens vignetting, and non-linear response functions. We thoroughly evaluate our method on three different datasets comprising several hours of video. The experiments show that the presented approach significantly outperforms state-of-the-art direct and indirect methods in a variety of real-world settings, both in terms of tracking accuracy and robustness.
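To make the photometric model described above more concrete, here is a minimal Python sketch for a single pixel. It is not the DSO implementation. It assumes the image formation model I(x) = G(t V(x) B(x)), with response function G, vignette V, exposure time t and scene irradiance B, and a residual that compensates for exposure times and affine brightness parameters (a, b), following the general form given in the paper. The names `photometric_correct`, `photometric_residual` and `huber`, the gamma-like response, and all numeric values are hypothetical and chosen only for illustration. The full method additionally sums weighted residuals over a small pixel pattern and reprojects points using their inverse depth, which is omitted here.

```python
import math


def photometric_correct(raw, inv_response, vignette):
    """Undo response function and vignetting for one pixel.

    Assumes I = G(t * V * B); returns G^{-1}(I) / V = t * B.
    `inv_response` is a callable approximating G^{-1} (assumption).
    """
    return inv_response(raw) / vignette


def photometric_residual(I_i, I_j, t_i, t_j, a_i=0.0, b_i=0.0, a_j=0.0, b_j=0.0):
    """Brightness difference between a point in frame i and its
    reprojection in frame j, compensated for exposure times (t) and
    affine brightness parameters (a, b)."""
    scale = (t_j * math.exp(a_j)) / (t_i * math.exp(a_i))
    return (I_j - b_j) - scale * (I_i - b_i)


def huber(r, delta):
    """Standard Huber cost, used here as a robust penalty on a residual."""
    a = abs(r)
    return 0.5 * r * r if a <= delta else delta * (a - 0.5 * delta)


# Toy example: one scene point with irradiance B, seen in two frames with
# different exposure times and vignette attenuation (illustrative values).
gamma = 2.2
response = lambda x: x ** (1.0 / gamma)   # G, an illustrative non-linear response
inv_response = lambda x: x ** gamma       # G^{-1}

B = 2.0
t_i, V_i = 0.01, 0.8
t_j, V_j = 0.02, 0.5
raw_i = response(t_i * V_i * B)           # raw pixel value in frame i
raw_j = response(t_j * V_j * B)           # raw pixel value in frame j

I_i = photometric_correct(raw_i, inv_response, V_i)
I_j = photometric_correct(raw_j, inv_response, V_j)
r = photometric_residual(I_i, I_j, t_i, t_j)
cost = huber(r, delta=9.0)
```

In this toy setup the raw pixel values differ although the scene point is identical, and the residual vanishes up to floating-point error once response, vignetting and exposure are accounted for. That is the motivation for integrating photometric calibration into a direct method.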

Dataset

Please see here for the TUM monoVO dataset, used for large parts of the evaluation and the above video. It contains over 2h of video and respective evaluation / benchmarking metrics / tools.

Supplementary Material

Supplementary material with all ORB-SLAM and DSO results presented in the paper can be downloaded from here: zip (2.7GB). We further provide ready-to-use Matlab scripts to reproduce all plots in the paper from the above archive, which can be downloaded here: zip (30MB).

14.10.2016: We have updated the supplementary material with the fixed real-time results for ORB-SLAM, corresponding to the revised version of the papers.

Open-Source Code

The full source code is available on Github under GPLv3: https://github.com/JakobEngel/dso. This main project is meant to run on datasets in the TUM monoVO dataset format (i.e., not with a live camera).

We also provide a minimalistic example (200 lines of C++ code) of how to integrate DSO to work with a live camera, using ROS for video capture: https://github.com/JakobEngel/dso_ros. Feel free to adapt it to your use-case / camera capture environment / ROS version.

Exclusive commercial rights for DSO are held by Artisense. For commercial licensing of DSO please contact licensing@artisense.ai.

Extensions

Publications

Journal Articles

2022

DM-VIO: Delayed Marginalization Visual-Inertial Odometry, In IEEE Robotics and Automation Letters (RA-L) & International Conference on Robotics and Automation (ICRA), volume 7, 2022. ([arXiv][video][project page][supplementary][code])

2018

Omnidirectional DSO: Direct Sparse Odometry with Fisheye Cameras, In IEEE Robotics and Automation Letters & Int. Conference on Intelligent Robots and Systems (IROS), IEEE, 2018. ([arxiv])

Online Photometric Calibration of Auto Exposure Video for Realtime Visual Odometry and SLAM, In IEEE Robotics and Automation Letters (RA-L), volume 3, 2018. (This paper was also selected by ICRA'18 for presentation at the conference. [arxiv][video][code][project])

ICRA'18 Best Vision Paper Award - Finalist

Direct Sparse Odometry, In IEEE Transactions on Pattern Analysis and Machine Intelligence, 2018.

Conference and Workshop Papers

2021

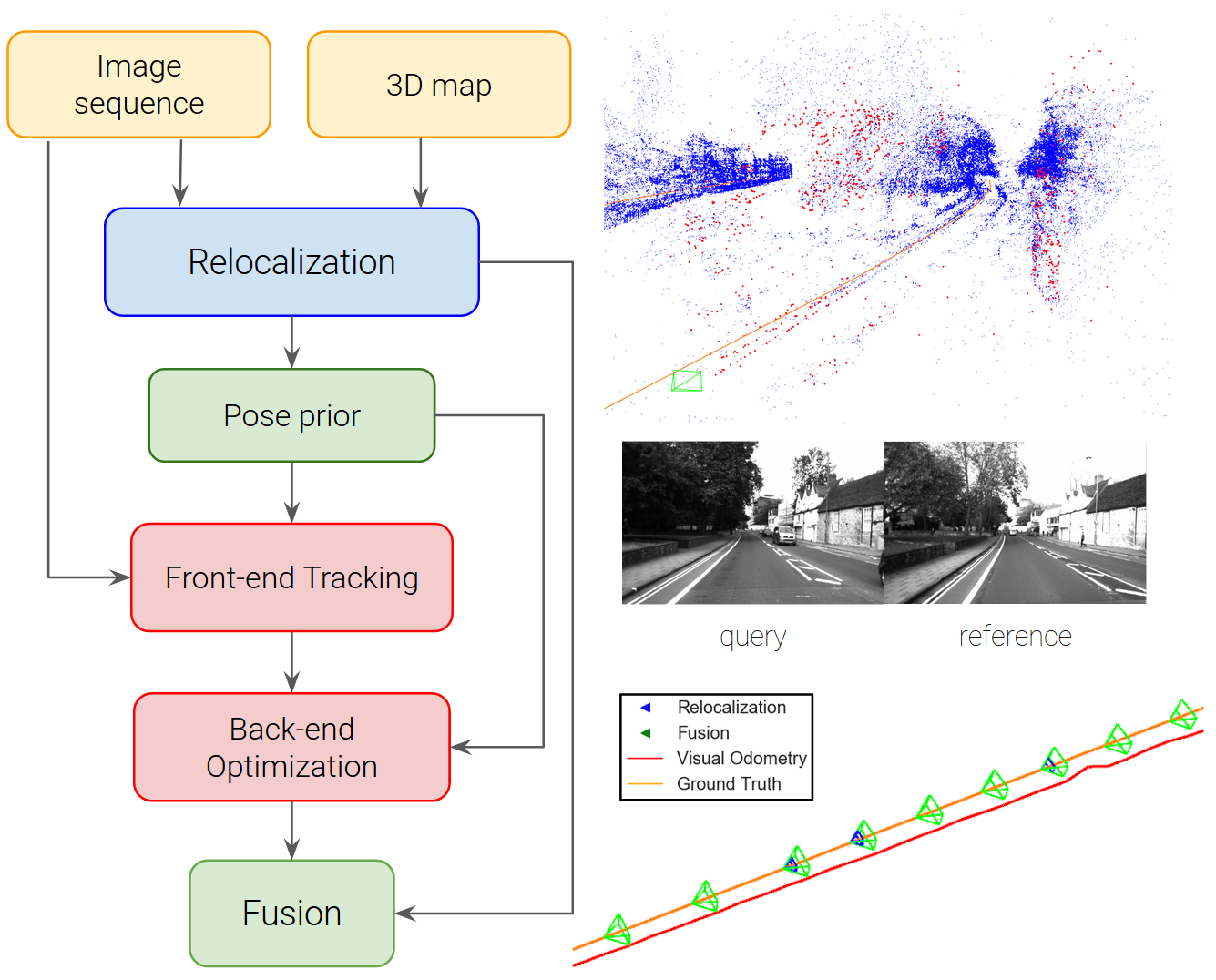

Tight Integration of Feature-based Relocalization in Monocular Direct Visual Odometry, In Proc. of the IEEE International Conference on Robotics and Automation (ICRA), 2021. ([project page])

2020

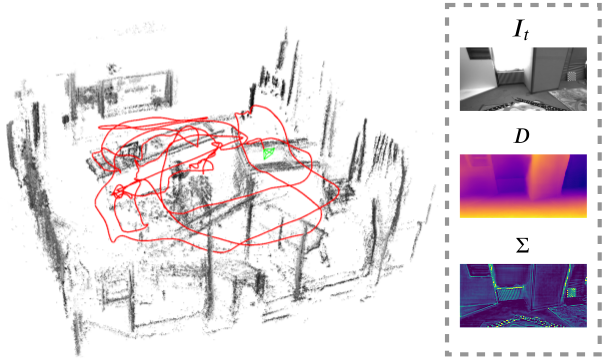

D3VO: Deep Depth, Deep Pose and Deep Uncertainty for Monocular Visual Odometry, In IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2020.

Oral Presentation

2019

Rolling-Shutter Modelling for Visual-Inertial Odometry, In International Conference on Intelligent Robots and Systems (IROS), 2019. ([arxiv])

2018

Direct Sparse Odometry With Rolling Shutter, In European Conference on Computer Vision (ECCV), 2018. ([supplementary][arxiv])

Oral Presentation

Deep Virtual Stereo Odometry: Leveraging Deep Depth Prediction for Monocular Direct Sparse Odometry, In European Conference on Computer Vision (ECCV), 2018. ([arxiv][supplementary][project])

Oral Presentation

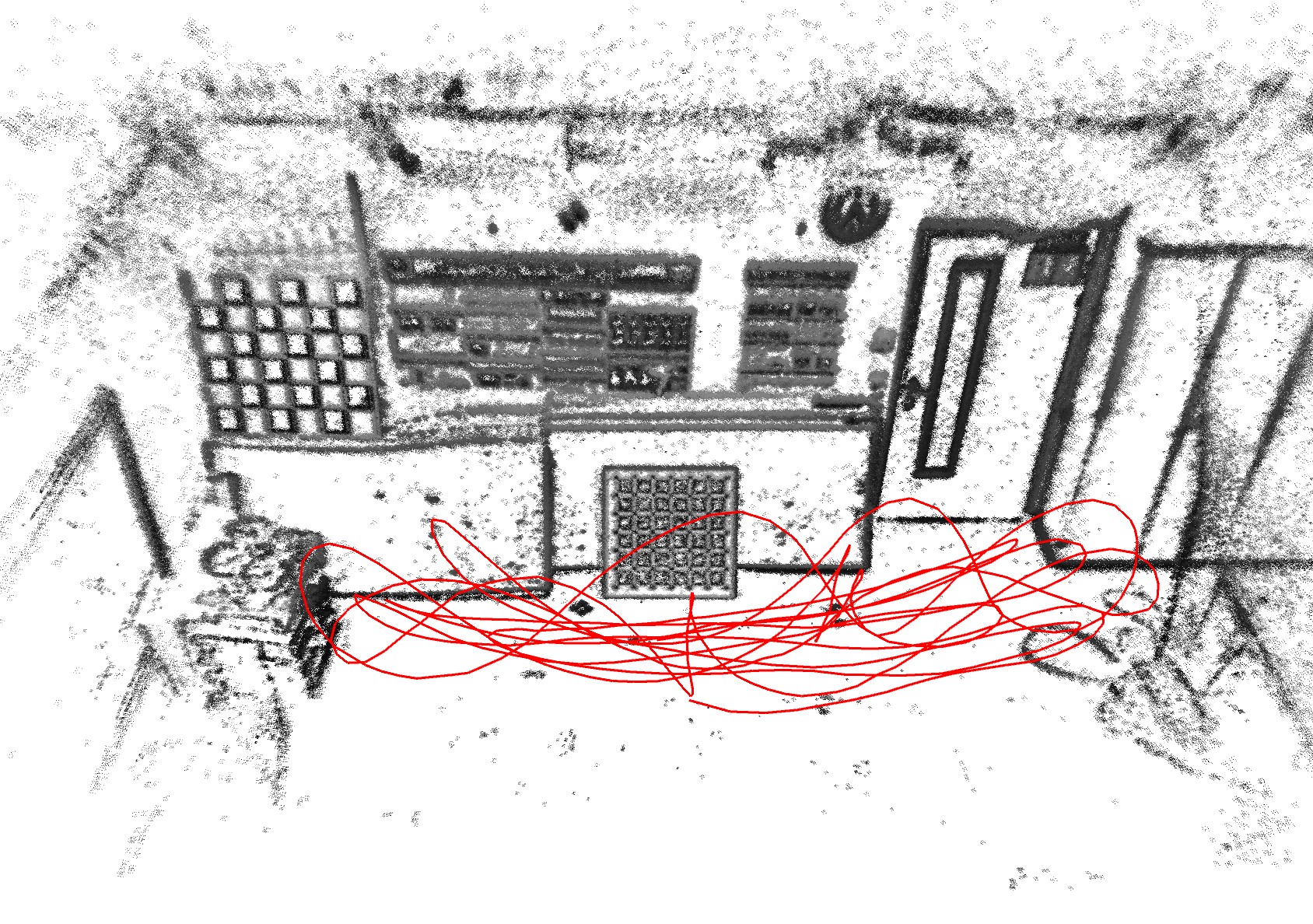

LDSO: Direct Sparse Odometry with Loop Closure, In International Conference on Intelligent Robots and Systems (IROS), 2018. ([arxiv][video][code][project])

Direct Sparse Visual-Inertial Odometry using Dynamic Marginalization, In International Conference on Robotics and Automation (ICRA), 2018. ([supplementary][video][arxiv])

2017

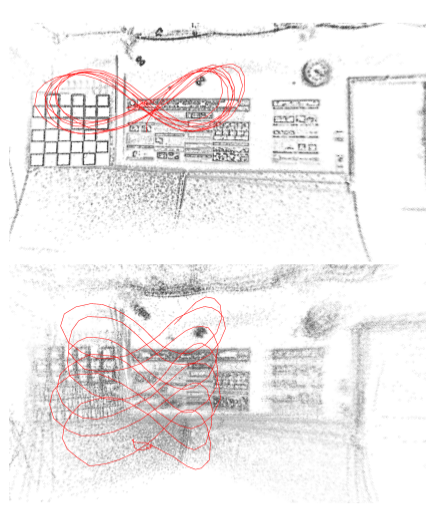

Stereo DSO: Large-Scale Direct Sparse Visual Odometry with Stereo Cameras, In International Conference on Computer Vision (ICCV), 2017. ([supplementary][video][arxiv][project])

2016

Direct Sparse Odometry, In arXiv:1607.02565, 2016.

A Photometrically Calibrated Benchmark For Monocular Visual Odometry, In arXiv:1607.02555, 2016.