VI-DSO: Direct Sparse Visual-Inertial Odometry using Dynamic Marginalization

Contact: Lukas von Stumberg, Vladyslav Usenko, Prof. Daniel Cremers

Abstract

We present VI-DSO, a novel approach for visual-inertial odometry that jointly estimates camera poses and sparse scene geometry by minimizing photometric and IMU measurement errors in a combined energy functional. The visual part of the system performs a bundle-adjustment-like optimization on a sparse set of points, but unlike keypoint-based systems it directly minimizes a photometric error. This makes it possible for the system to track not only corners, but any pixels with sufficiently large intensity gradients. IMU information is accumulated between several frames using measurement preintegration and is inserted into the optimization as an additional constraint between keyframes. We explicitly include scale and gravity direction in our model and optimize them jointly with other variables such as poses. As the scale is often not immediately observable from IMU data, this allows us to initialize our visual-inertial system with an arbitrary scale instead of delaying the initialization until everything is observable. We perform partial marginalization of old variables so that updates can be computed in a reasonable time. To keep the system consistent, we propose a novel strategy that we call "dynamic marginalization". This technique allows us to use partial marginalization even in cases where the initial scale estimate is far from the optimum. We evaluate our method on the challenging EuRoC dataset, showing that VI-DSO outperforms the state of the art.
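To illustrate the partial marginalization step mentioned in the abstract, the following is a minimal sketch of removing one scalar variable from a Gauss-Newton system `H x = b` with the Schur complement. It is not the VI-DSO implementation and does not show dynamic marginalization itself; the function name `marginalize_scalar`, the sign convention `H x = b`, and the toy numbers are assumptions made for this example.

```python
def marginalize_scalar(H, b, m):
    """Remove variable m from the linear system H x = b (hypothetical helper).

    Uses the Schur complement on a single scalar block:
        H_red = H_aa - H_am * H_mm^{-1} * H_ma
        b_red = b_a  - H_am * H_mm^{-1} * b_m
    where 'a' indexes the variables that are kept.
    """
    h_mm = H[m][m]
    if h_mm == 0:
        raise ValueError("cannot marginalize a variable with zero curvature")

    # Indices of the variables that remain in the system.
    keep = [i for i in range(len(b)) if i != m]

    # Fold the information carried by variable m into the remaining block.
    H_red = [[H[i][j] - H[i][m] * H[m][j] / h_mm for j in keep] for i in keep]
    b_red = [b[i] - H[i][m] * b[m] / h_mm for i in keep]
    return H_red, b_red


# Toy system (assumed values): two coupled scalar variables.
H = [[4.0, 2.0],
     [2.0, 3.0]]
b = [2.0, 1.0]

# Marginalize the second variable; the first one keeps the same solution
# it would have had in the full system.
H_red, b_red = marginalize_scalar(H, b, 1)
x0 = b_red[0] / H_red[0][0]
```

The reduced system acts as a prior on the remaining variables. Because it is linearized at the estimate available when the variable is removed, a poor estimate (for example of the scale) gets baked into that prior, which is the consistency problem the abstract says dynamic marginalization is meant to address.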

Downloads

The paper can be downloaded at: http://arxiv.org/abs/1804.05625

There is also supplementary material with additional evaluation and mathematical derivations at: vi-dso-supplementary-material.pdf.

The video is available at: https://youtu.be/GoqnXDS7jbA

The project is based on DSO which was developed by Jakob Engel.


Journal Articles

2022


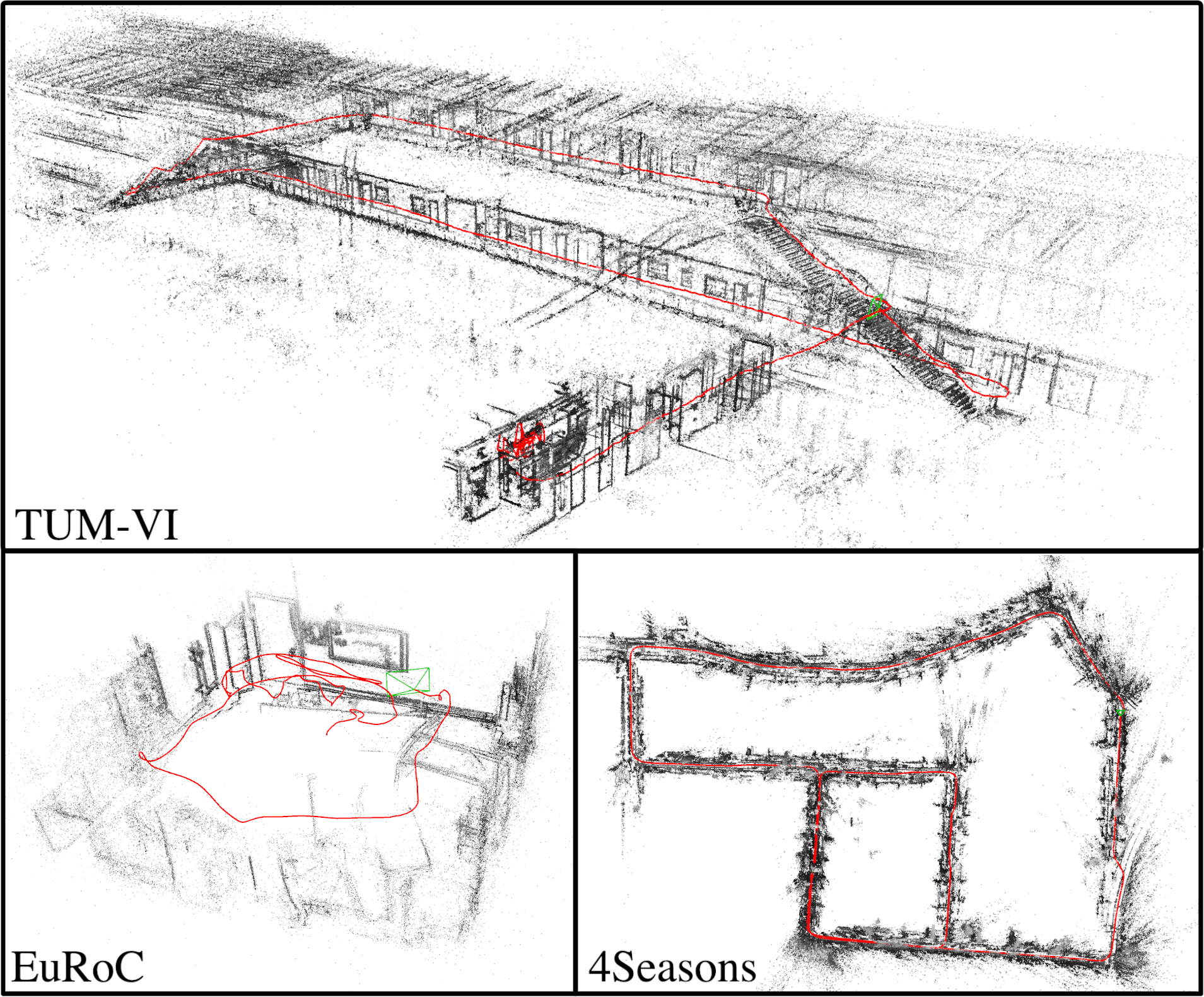

DM-VIO: Delayed Marginalization Visual-Inertial Odometry, In IEEE Robotics and Automation Letters (RA-L) & International Conference on Robotics and Automation (ICRA), volume 7, 2022. ([arXiv][video][project page][supplementary][code])

Conference and Workshop Papers

2018


Direct Sparse Visual-Inertial Odometry using Dynamic Marginalization, In International Conference on Robotics and Automation (ICRA), 2018. ([supplementary][video][arXiv])