Nan Yang

PhD student

Technical University of Munich, School of Computation, Information and Technology

Informatics 9

Boltzmannstrasse 3

85748 Garching

Germany

Fax: +49-89-289-17757

Office:

Mail: yangn@in.tum.de

>>Personal Website<<

- [12.2021] TANDEM won the Best Demo Award at 3DV 2021!

- [05.2021] Received the Outstanding Reviewer award at CVPR 2021.

- [04.2021] The 4Seasons dataset is now public.

- [12.2020] Finished my internship at Facebook Reality Labs, where I worked on collaborative mapping.

- [10.2020] LM-Reloc accepted at 3DV 2020.

- [09.2020] Started my internship at Facebook Reality Labs.

- [05.2020] Co-organized the Map-based Localization for Autonomous Driving Workshop at ECCV 2020.

- [02.2020] D3VO accepted as an oral presentation at CVPR 2020.

Brief Bio

Find me on Google Scholar, LinkedIn, and Twitter.

I received my Bachelor's degree in Computer Science from Beijing University of Posts and Telecommunications and my Master's degree in Informatics from the Technical University of Munich. Since May 2018, I have been a Ph.D. student and a senior computer vision researcher at Artisense, a startup co-founded by Prof. Daniel Cremers. From September 2020 to December 2020, I was an intern at Facebook Reality Labs, working on collaborative mapping.

Research

My research focuses on enhancing classical 3D vision tasks, e.g., visual odometry / simultaneous localization and mapping (SLAM), re-localization, and dense reconstruction, with the aid of deep neural networks. Here are some selected projects:

Visual Odometry

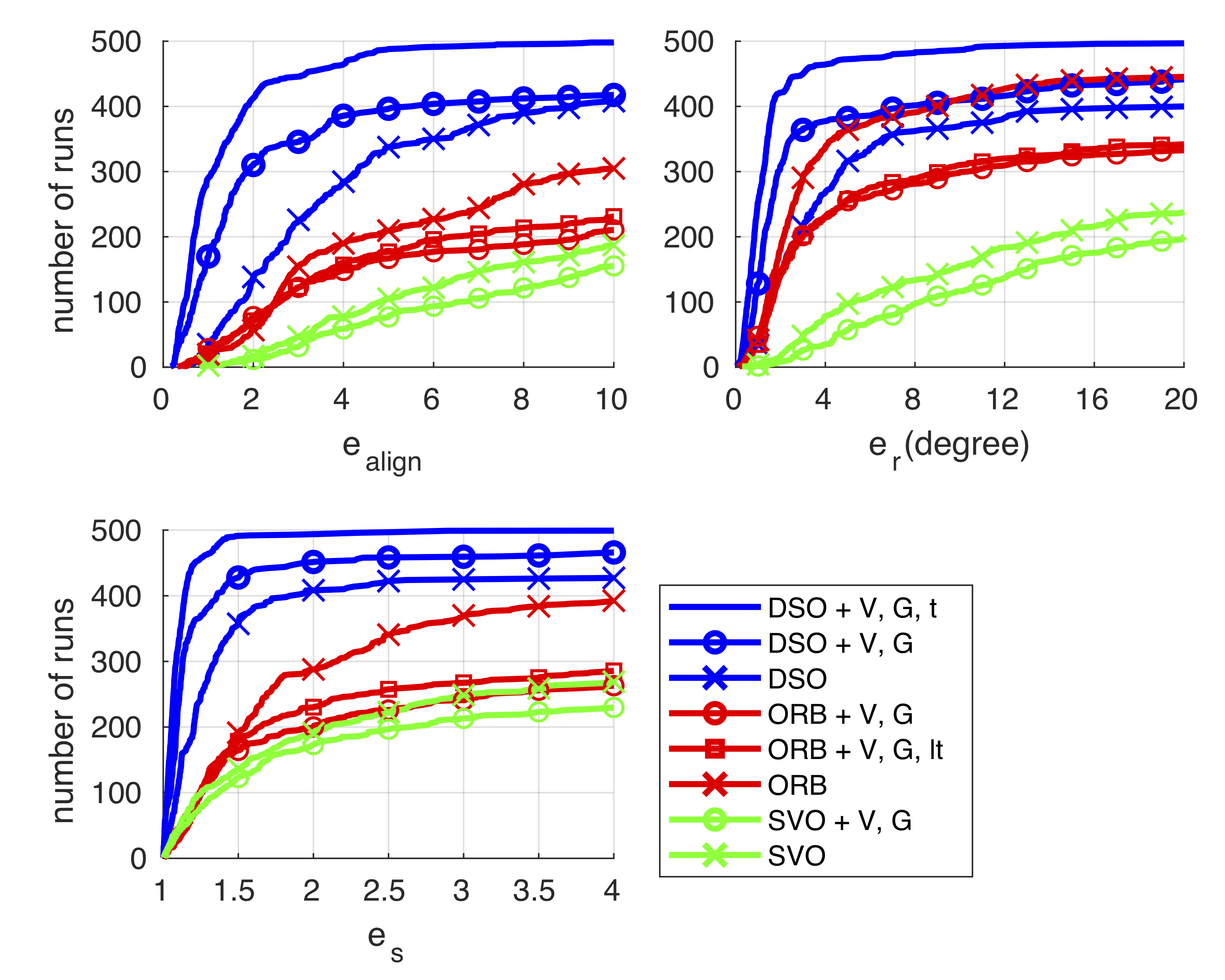

- Deep Virtual Stereo Odometry: Leveraging Deep Depth Prediction for Monocular Direct Sparse Odometry, ECCV 2018, Oral Presentation.

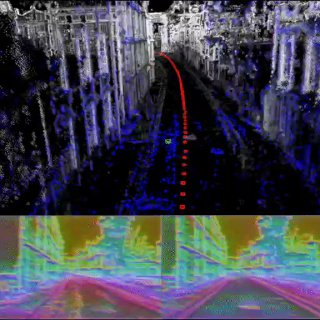

- D3VO: Deep Depth, Deep Pose and Deep Uncertainty for Monocular Visual Odometry, CVPR 2020, Oral Presentation.

- Multi-Frame GAN: Image Enhancement for Stereo Visual Odometry in Low Light, CoRL 2019, Long Oral Presentation.

Dense Reconstruction

- MonoRec: Semi-Supervised Dense Reconstruction in Dynamic Environments from a Single Moving Camera, CVPR 2021.

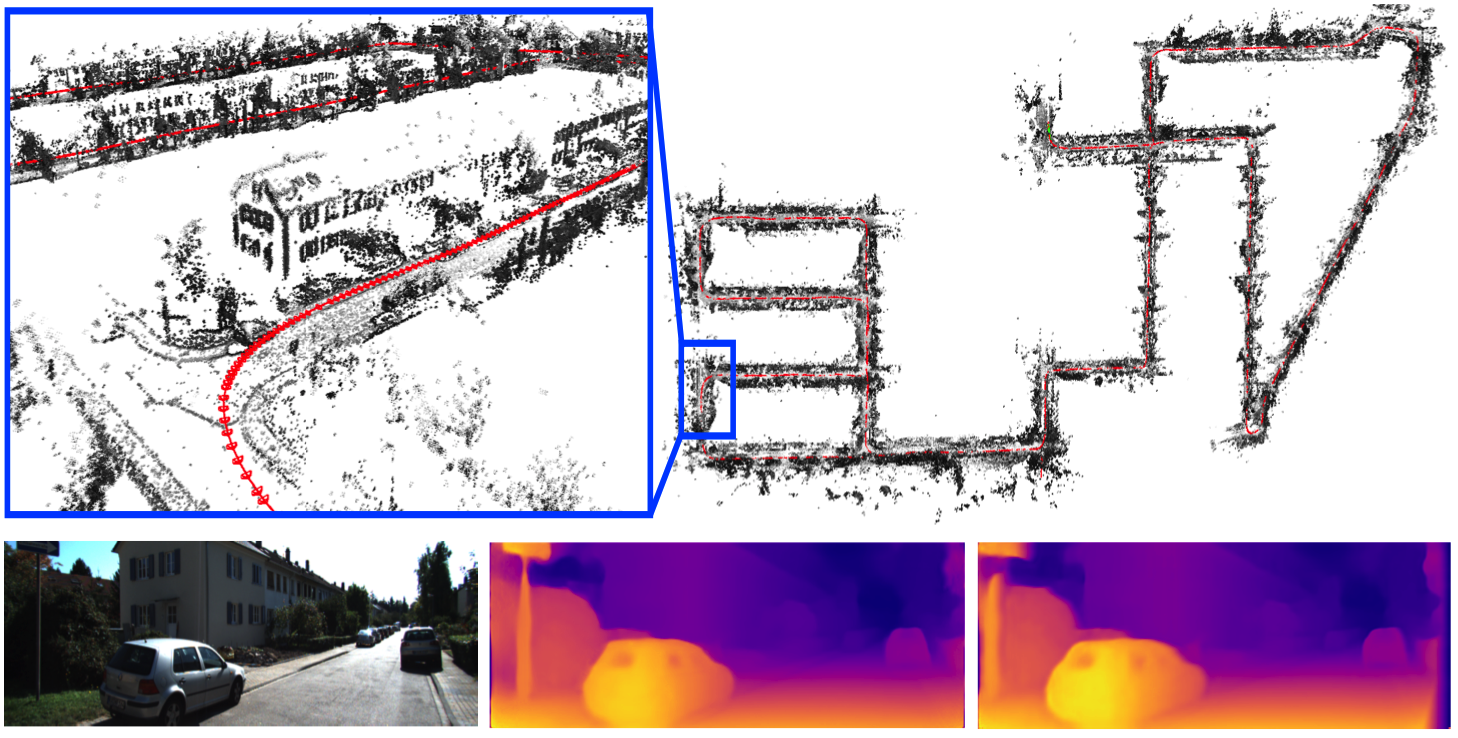

- TANDEM: Tracking and Dense Mapping in Real-time using Deep Multi-view Stereo, CoRL 2021, Best Demo Award at 3DV 2021.

Re-localization

- LM-Reloc: Levenberg-Marquardt Based Direct Visual Relocalization, 3DV 2020.

Object-level Perception

- DirectShape: Photometric Alignment of Shape Priors for Visual Vehicle Pose and Shape Estimation, ICRA 2020.

- Learning Monocular 3D Vehicle Detection without 3D Bounding Box Labels, GCPR 2020.

Professional Services

- Journal reviewer: RA-L, AURO, ISPRS

- Conference reviewer: CVPR, ECCV, ICCV, ICLR, AAAI, ICRA, IROS

Publications

Journal Articles

2018

Challenges in Monocular Visual Odometry: Photometric Calibration, Motion Bias and Rolling Shutter Effect, In IEEE Robotics and Automation Letters (RA-L) & Int. Conference on Intelligent Robots and Systems (IROS), volume 3, 2018. ([arXiv])

Preprints

2022

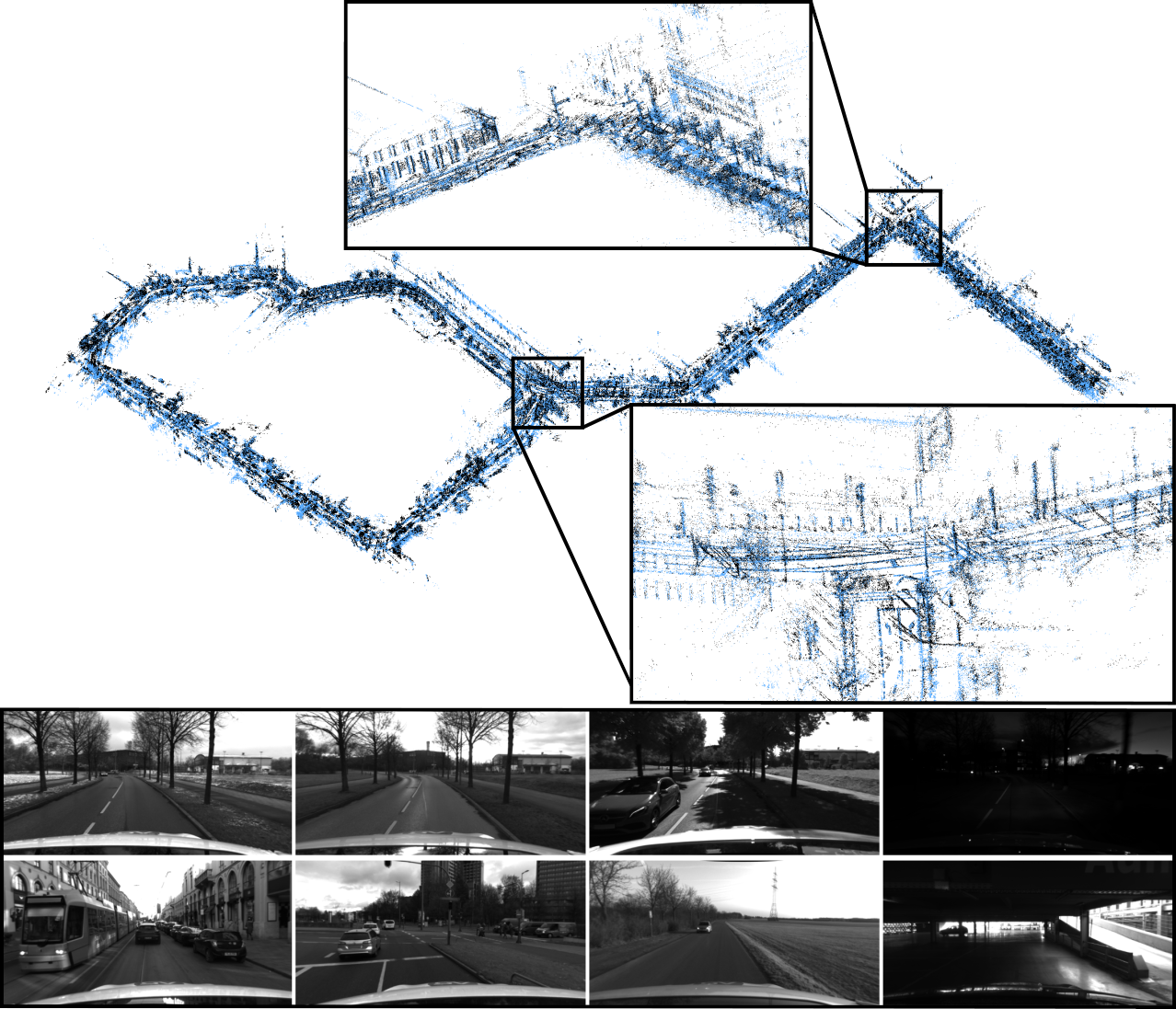

4Seasons: Benchmarking Visual SLAM and Long-Term Localization for Autonomous Driving in Challenging Conditions, In arXiv preprint arXiv:2301.01147, 2022.

Conference and Workshop Papers

2024

FIRe: Fast Inverse Rendering Using Directional and Signed Distance Functions, In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), 2024. ([project page][arXiv])

2023

Behind the Scenes: Density Fields for Single View Reconstruction, In IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2023. ([project page])

2021

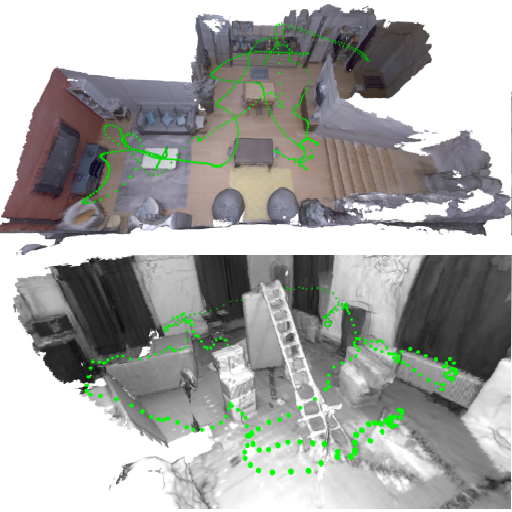

TANDEM: Tracking and Dense Mapping in Real-time using Deep Multi-view Stereo, In Conference on Robot Learning (CoRL), 2021. ([GitHub][video][project page])

3DV'21 Best Demo Award

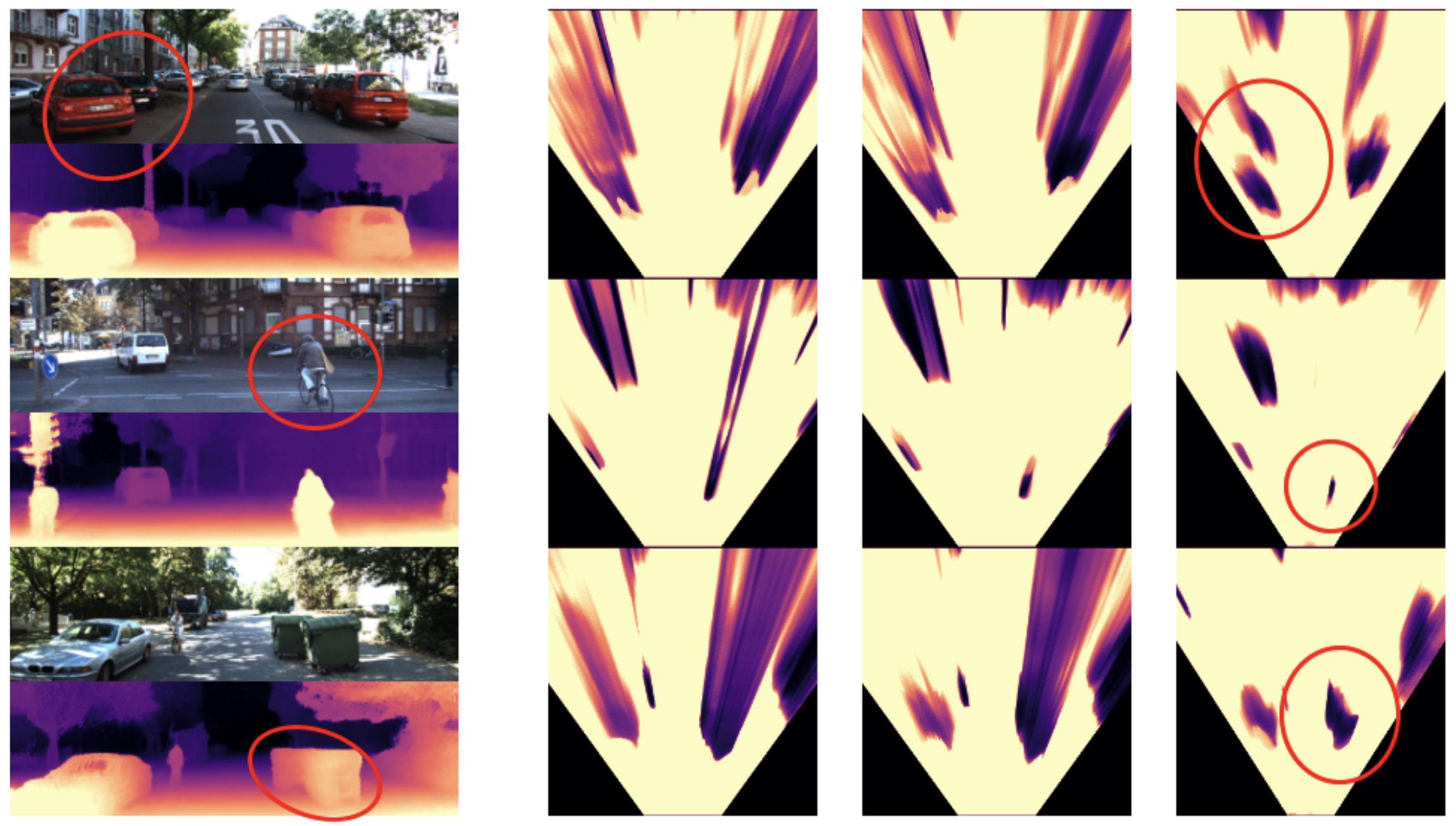

MonoRec: Semi-Supervised Dense Reconstruction in Dynamic Environments from a Single Moving Camera, In IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2021. ([project page])

2020

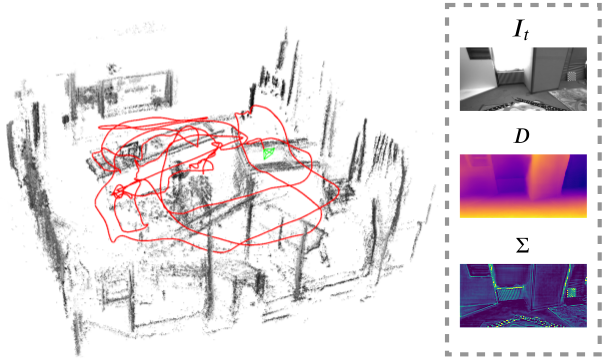

LM-Reloc: Levenberg-Marquardt Based Direct Visual Relocalization, In International Conference on 3D Vision (3DV), 2020. ([arXiv][project page][video][supplementary][poster])

4Seasons: A Cross-Season Dataset for Multi-Weather SLAM in Autonomous Driving, In Proceedings of the German Conference on Pattern Recognition (GCPR), 2020. ([project page][arXiv][video])

Learning Monocular 3D Vehicle Detection without 3D Bounding Box Labels, In Proceedings of the German Conference on Pattern Recognition (GCPR), 2020. ([project page][video])

D3VO: Deep Depth, Deep Pose and Deep Uncertainty for Monocular Visual Odometry, In IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2020.

Oral Presentation

DirectShape: Photometric Alignment of Shape Priors for Visual Vehicle Pose and Shape Estimation, In Proc. of the IEEE International Conference on Robotics and Automation (ICRA), 2020. ([video][presentation][project page][supplementary][arXiv])

2019

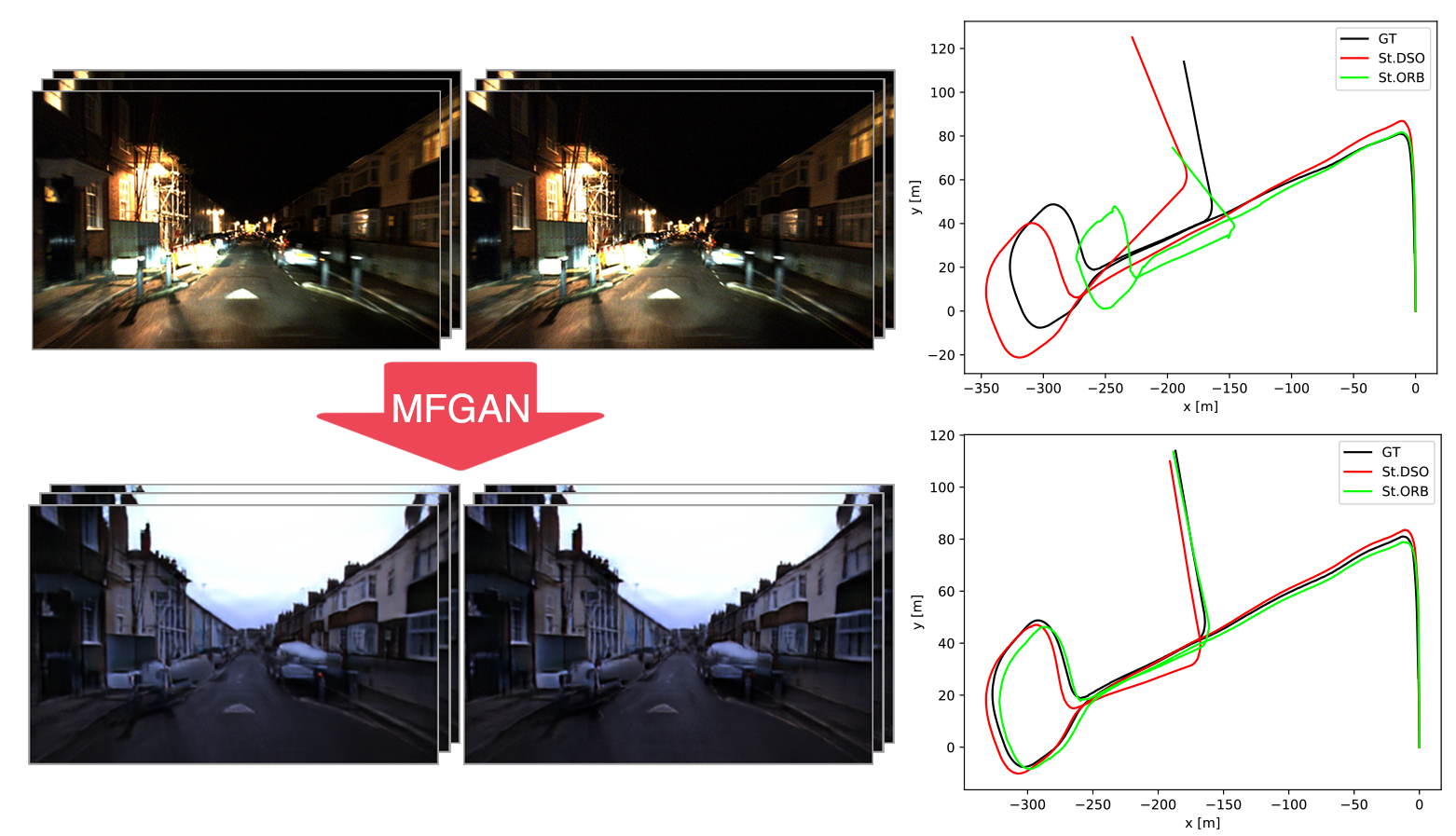

Multi-Frame GAN: Image Enhancement for Stereo Visual Odometry in Low Light, In Conference on Robot Learning (CoRL), 2019. ([arXiv][supplementary][video])

Full Oral Presentation

2018

Deep Virtual Stereo Odometry: Leveraging Deep Depth Prediction for Monocular Direct Sparse Odometry, In European Conference on Computer Vision (ECCV), 2018. ([arXiv][supplementary][project])

Oral Presentation