Double Sphere Camera Model

Contact : Vladyslav Usenko, Nikolaus Demmel.

Vision-based motion estimation and 3D reconstruction, which have numerous applications (e.g., autonomous driving, navigation systems for airborne devices and augmented reality) are receiving significant research attention. To increase the accuracy and robustness, several researchers have recently demonstrated the benefit of using large field-of-view cameras for such applications.

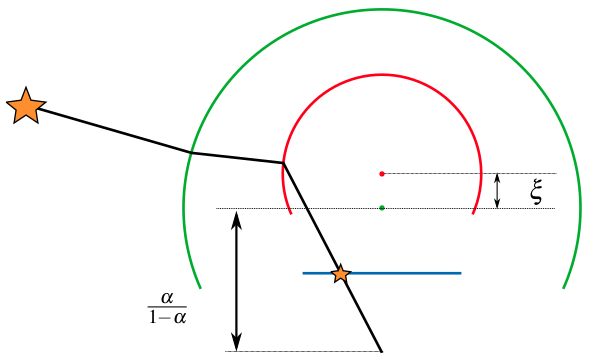

In this paper, we provide an extensive review of existing models for large field-of-view cameras. For each model we provide projection and unprojection functions and the subspace of points that result in valid projection. Then, we propose the Double Sphere camera model that well fits with large field-of-view lenses, is computationally inexpensive and has a closed-form inverse. We evaluate the model using a calibration dataset with several different lenses and compare the models using the metrics that are relevant for Visual Odometry, i.e., reprojection error, as well as computation time for projection and unprojection functions and their Jacobians. We also provide qualitative results and discuss the performance of all models.

Export as PDF, XML, TEX or BIB

Conference and Workshop Papers

2018

[]

The Double Sphere Camera Model , In Proc. of the Int. Conference on 3D Vision (3DV), 2018. ([arxiv])

Open-Source Calibration Tool

The open-source code for calibration is available here:

https://gitlab.com/VladyslavUsenko/basalt.

(GitHub mirror: https://github.com/VladyslavUsenko/basalt-mirror)

Follow the corresponding tutorial to see the examples on Euroc, TUM-VI and UZH dataset calibration sequences.

Datasets and Calibration Results

| Lens | Dataset 1 | Dataset 2 | Dataset 3 | |||

| BF2M2020S23 | BF2M2020S23-1.bag | results | BF2M2020S23-2.bag | results | BF2M2020S23-3.bag | results |

| BF5M13720 | BF5M13720-1.bag | results | BF5M13720-2.bag | results | BF5M13720-3.bag | results |

| BM4018S118 | BM4018S118-1.bag | results | BM4018S118-2.bag | results | BM4018S118-3.bag | results |

| BM2820 | BM2820-1.bag | results | BM2820-2.bag | results | BM2820-3.bag | results |

| GOPRO | GOPRO-1.bag | results | GOPRO-2.bag | results | GOPRO-3.bag | results |

| EUROC | EUROC.bag | results | ||||

Kalibr Example

We have contributed the implementation of Extended Unified Camera Model (EUCM) and Double Sphere Camera Model (DS) to open-source calibration tool Kalibr. See Supported Camera Models and examples below for details.

The example of multi-camera and IMU calibration for Euroc dataset is shown below (results):

#!/bin/bash

MODELS=(omni-none omni-radtan ds-none eucm-none pinhole-equi)

mkdir euroc

cd euroc

wget http://robotics.ethz.ch/~asl-datasets/ijrr_euroc_mav_dataset/calibration_datasets/imu_april/imu_april.bag

wget http://robotics.ethz.ch/~asl-datasets/ijrr_euroc_mav_dataset/calibration_datasets/imu_april/april_6x6.yaml

wget http://robotics.ethz.ch/~asl-datasets/ijrr_euroc_mav_dataset/calibration_datasets/cam_april/cam_april.bag

cat > imu.yaml <<- EOM

gyroscope_noise_density: 1.6968e-04

gyroscope_random_walk: 1.9393e-05

accelerometer_noise_density: 2.0000e-3

accelerometer_random_walk: 3.0000e-3

rostopic: /imu0

update_rate: 200.0

EOM

for m in "${MODELS[@]}"

do

mkdir ${m}

cd ${m}

kalibr_calibrate_cameras --bag ../cam_april.bag --topics /cam0/image_raw /cam1/image_raw --models ${m} ${m} --target ../april_6x6.yaml --bag-from-to 20 40 --dont-show-report > out_cam.txt 2>&1

kalibr_calibrate_imu_camera --target ../april_6x6.yaml --cam camchain-..cam_april.yaml --imu ../imu.yaml --bag ../imu_april.bag --dont-show-report > out_imu.txt 2>&1

cd ..

done

Another example of multi-camera and IMU calibration for TUM-VI dataset is shown below (results):

#!/bin/bash

MODELS=(omni-none omni-radtan ds-none eucm-none pinhole-equi)

mkdir tumvi

cd tumvi

wget https://vision.in.tum.de/_media/data/datasets/visual-inertial-dataset/april_6x6_80x80cm.zip

unzip april_6x6_80x80cm.zip

wget https://cdn3.vision.in.tum.de/tumvi/calibrated/512_16/dataset-calib-cam1_512_16.bag

wget https://cdn3.vision.in.tum.de/tumvi/calibrated/512_16/dataset-calib-imu3_512_16.bag

cat > imu.yaml <<- EOM

accelerometer_noise_density: 0.0028

accelerometer_random_walk: 0.00086

gyroscope_noise_density: 0.00016

gyroscope_random_walk: 0.000022

rostopic: /imu0

update_rate: 200.0

EOM

for m in "${MODELS[@]}"

do

mkdir ${m}

cd ${m}

kalibr_calibrate_cameras --bag ../dataset-calib-cam1_512_16.bag --topics /cam0/image_raw /cam1/image_raw --models ${m} ${m} --target ../april_6x6_80x80cm.yaml --dont-show-report > out_cam.txt 2>&1

kalibr_calibrate_imu_camera --target ../april_6x6_80x80cm.yaml --cam camchain-..dataset-calib-cam1_512_16.yaml --imu ../imu.yaml --bag ../dataset-calib-imu3_512_16.bag --dont-show-report > out_imu.txt 2>&1

cd ..

done

Example of camera calibration for the Double Sphere paper dataset (results):

#!/bin/bash

MODELS=(

omni-none

omni-radtan

ds-none

eucm-none

pinhole-equi

)

BAGS=(

BF2M2020S23-1

BF5M13720-1

BM4018S118-1

BM2820-1

GOPRO-1

BF2M2020S23-2

BF5M13720-2

BM4018S118-2

BM2820-2

GOPRO-2

BF2M2020S23-3

BF5M13720-3

BM4018S118-3

BM2820-3

GOPRO-3

EUROC

)

MAX_JOBS=8

parallel () {

while [ "$(jobs | wc -l)" -ge $MAX_JOBS ]

do

wait -n

done

"$@" &

}

mkdir ds

cd ds

wget https://vision.in.tum.de/_media/data/datasets/visual-inertial-dataset/april_6x6_80x80cm.zip

unzip april_6x6_80x80cm.zip

for b in "${BAGS[@]}"; do

wget https://cdn3.vision.in.tum.de/double-sphere/dataset1/${b}.bag

done

for b in "${BAGS[@]}"; do

mkdir $b

cd $b

for m in "${MODELS[@]}"

do

mkdir ${m}

cd ${m}

parallel kalibr_calibrate_cameras --bag ../../${b}.bag --topics /cam0/image_raw --models ${m} --target ../../april_6x6_80x80cm.yaml --dont-show-report > calibration_out.txt 2>&1

cd ..

done

cd ..

done

Note: Kalibr fails to calibrate pinhole-equi on the TUMVI calibration sequence, which might be a limitation of the model implementation by Kalibr. First, Kalibr implements Kannala-Brandt (which corresponds to pinhole-equi in Kalibr) as pinhole+distortion, which limits it to below field-of-view below 180 deg. The tested lens has 190 deg FOV. Second, Kalibr does initialization by optimizing with a single image, which may result in divergence when the parameters are not well constrained by a single image. We found with our own calibration tool that the Kanala-Brandt model can fit the lens quite well.