Rolling-Shutter Visual-Inertial Odometry Dataset

Contact: David Schubert, Nikolaus Demmel, Lukas von Stumberg, Vladyslav Usenko.

We present a novel dataset that contains time-synchronized global-shutter and rolling-shutter images, IMU data and ground-truth poses for ten different sequences.


Conference and Workshop Papers

2019


Rolling-Shutter Modelling for Visual-Inertial Odometry. In International Conference on Intelligent Robots and Systems (IROS), 2019. (arXiv)

Dataset

The full dataset can be found at: https://cdn3.vision.in.tum.de/rolling/

| Sequence | ROS bag | EuRoC/DSO format | Length [m] |
|---|---|---|---|
| Sequence 1 | dataset-seq1.bag | dataset-seq1.tar | 46 |
| Sequence 2 | dataset-seq2.bag | dataset-seq2.tar | 37 |
| Sequence 3 | dataset-seq3.bag | dataset-seq3.tar | 44 |
| Sequence 4 | dataset-seq4.bag | dataset-seq4.tar | 30 |
| Sequence 5 | dataset-seq5.bag | dataset-seq5.tar | 57 |
| Sequence 6 | dataset-seq6.bag | dataset-seq6.tar | 51 |
| Sequence 7 | dataset-seq7.bag | dataset-seq7.tar | 45 |
| Sequence 8 | dataset-seq8.bag | dataset-seq8.tar | 46 |
| Sequence 9 | dataset-seq9.bag | dataset-seq9.tar | 46 |
| Sequence 10 | dataset-seq10.bag | dataset-seq10.tar | 41 |
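To script downloads of the ten sequences, the file names from the table can be turned into URLs. This is a minimal sketch that assumes the `.bag` and `.tar` files sit directly under the dataset URL given above with exactly the names listed in the table; please check the directory listing before relying on it. The helper name `sequence_urls` is hypothetical.

```python
# Sketch: enumerate the sequences from the table and build download URLs.
# ASSUMPTION: files live directly under BASE_URL with the names from the table.
BASE_URL = "https://cdn3.vision.in.tum.de/rolling/"

# Trajectory lengths in metres, copied from the table.
LENGTHS_M = {1: 46, 2: 37, 3: 44, 4: 30, 5: 57, 6: 51, 7: 45, 8: 46, 9: 46, 10: 41}


def sequence_urls(seq: int) -> dict:
    """Return the assumed URLs of the ROS bag and the EuRoC/DSO archive of one sequence."""
    if seq not in LENGTHS_M:
        raise ValueError(f"unknown sequence {seq}")
    name = f"dataset-seq{seq}"
    return {"bag": f"{BASE_URL}{name}.bag", "tar": f"{BASE_URL}{name}.tar"}


if __name__ == "__main__":
    for seq in sorted(LENGTHS_M):
        print(seq, sequence_urls(seq)["tar"])
    # Sum of all trajectory lengths in the table (443 m).
    print("total length [m]:", sum(LENGTHS_M.values()))
```

The URLs can then be passed to any downloader (e.g. `wget` or `urllib.request`).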

Calibration

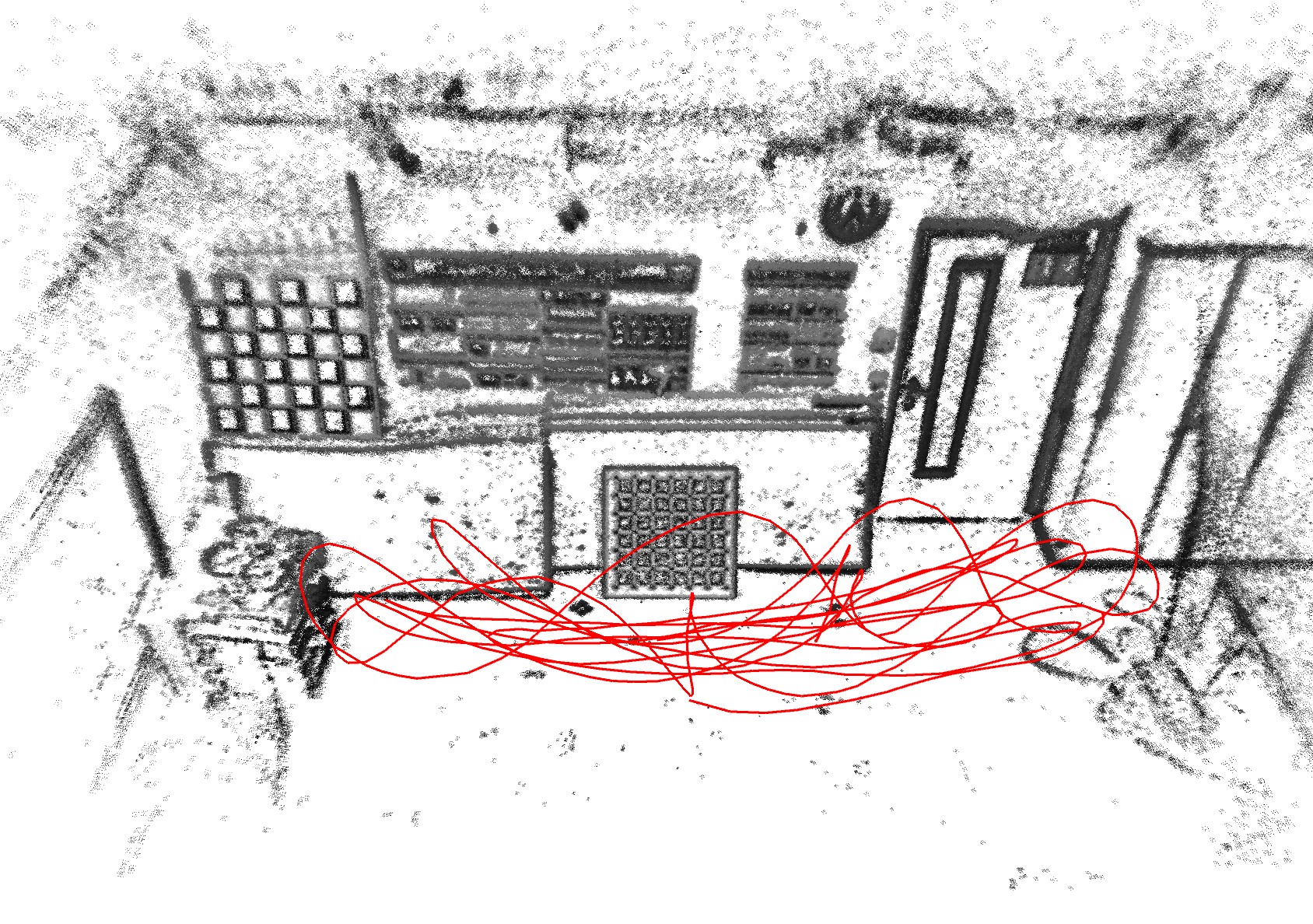

The figure shows the approximate sensor orientations in xyz-rgb convention, i.e. the x, y and z axes are drawn in red, green and blue, respectively.

Note: this figure is updated compared to the schematic illustration in the paper, which might have been confusing. Also, in the calibrated dataset, the offset between the IMU and marker reference frames has already been compensated: the ground-truth poses are post-processed to track the IMU frame.

For the calibrated sequences listed in the table, the ground-truth poses are given for the IMU coordinate frame and are time-synchronized with the image and IMU data. The geometric camera-IMU calibration can be found here: calibration.yaml.
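Since the ground truth tracks the IMU frame, a camera pose is obtained by composing it with the camera-IMU extrinsics. The sketch below assumes the ground-truth pose is given as a position plus a Hamilton quaternion in (w, x, y, z) order, and that the extrinsic read from calibration.yaml is the IMU-from-camera transform `T_i_c` (mapping camera-frame points into the IMU frame). The field names and direction convention in calibration.yaml are not specified here, so check them and invert the transform if needed. All function names are hypothetical.

```python
import numpy as np


def quat_wxyz_to_rot(q):
    """Rotation matrix from a quaternion (w, x, y, z), Hamilton convention; q is normalized first."""
    w, x, y, z = np.asarray(q, dtype=float) / np.linalg.norm(q)
    return np.array([
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ])


def make_transform(position, quat_wxyz):
    """4x4 homogeneous transform from a translation and a (w, x, y, z) quaternion."""
    T = np.eye(4)
    T[:3, :3] = quat_wxyz_to_rot(quat_wxyz)
    T[:3, 3] = position
    return T


def camera_pose(T_w_i, T_i_c):
    """World-from-camera pose: ground-truth world-from-IMU composed with IMU-from-camera."""
    return T_w_i @ T_i_c


if __name__ == "__main__":
    T_w_i = make_transform([1.0, 2.0, 3.0], [1.0, 0.0, 0.0, 0.0])  # example ground-truth pose
    T_i_c = make_transform([0.1, 0.0, 0.0], [1.0, 0.0, 0.0, 0.0])  # hypothetical extrinsics
    print(camera_pose(T_w_i, T_i_c))
```

Keeping poses as 4x4 matrices makes chaining and inverting transforms (`np.linalg.inv`) straightforward.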

Calibration was done using the following sequences.

| Sequence | ROS bag | EuRoC/DSO format |
|---|---|---|
| Camera calibration | dataset-calib-cam1.bag | dataset-calib-cam1.tar |
| IMU calibration | dataset-calib-imu1.bag | dataset-calib-imu1.tar |

Note that for the calibration sequences, both cameras were operating in global-shutter mode. For rolling-shutter images, the timestamp refers to the first row of the image. In general, timestamps denote the middle of the exposure interval.

For more information about calibration, we refer to our visual-inertial dataset.

According to the camera manufacturer, the time difference between two consecutive rows due to the rolling shutter cannot be read out directly, but it is very well approximated by the step size with which the exposure time can be adjusted. This gives an approximate row time difference of 29.4737 µs.
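Combining this row time with the timestamp convention (the image timestamp is the middle of the exposure of the first row) gives a simple linear model for the capture time of every row. This is a minimal sketch, assuming timestamps in seconds and rows read out in increasing row order starting at row 0; if the timestamps are stored as nanosecond integers, as is common for the EuRoC format, convert them first. The 512-row image height in the usage example is hypothetical, so check the actual image size in the data.

```python
# Row time difference from the text: 29.4737 microseconds, in seconds.
ROW_TIME_S = 29.4737e-6


def row_timestamp(t_image_s: float, row: int, row_time_s: float = ROW_TIME_S) -> float:
    """Approximate mid-exposure time of `row` in a rolling-shutter image.

    Assumes t_image_s is the image timestamp (mid-exposure of row 0) in seconds.
    """
    if row < 0:
        raise ValueError("row index must be non-negative")
    return t_image_s + row * row_time_s


def readout_duration_s(num_rows: int, row_time_s: float = ROW_TIME_S) -> float:
    """Time between the first and the last row of an image with num_rows rows."""
    if num_rows < 1:
        raise ValueError("image must have at least one row")
    return (num_rows - 1) * row_time_s


if __name__ == "__main__":
    t0 = 100.0  # example image timestamp in seconds
    print(row_timestamp(t0, 256))
    # Hypothetical 512-row image: about 15.06 ms between first and last row.
    print(readout_duration_s(512))
```

The sketch only covers the timing side: in a full rolling-shutter projection, the row a point lands in depends on the pose at that row's time, so the row is typically found iteratively.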